
Search Engine Watch sister site ClickZ has just launched the first report in its new series of buyers guides, which aims to disentangle and demystify the martech landscape for marketers. The guide, which focuses on bid management tools, covers a range of market-leading vendors and draws on months of research and more than 1,600 customer reviews. This will be the first in a series of guides created using the collective knowledge of the ClickZ and Search Engine Watch communities to help our readers arrive at more informed technology decisions. The modern martech landscape is complex and competitive, making it difficult for marketers to cut through the noise and select the right technology partners. Our buyers guides are created with the objective of providing a clear view on the areas in which vendors excel, in order to allow our readers to establish successful relationships with the most suitable platforms. What sets our guides apart is the use of a customer survey to hear directly from current clients of each software package. For the bid management tools guide, we received more than 1,600 survey responses, which have provided a wealth of valuable data across our six assessment categories.

Graphs in the report are interactive to allow comparison.

The series of guides begins with bid management tools because of the importance these technologies hold in the modern martech stack. Along with deriving maximum value from the $92 billion spent annually on paid search worldwide, these platforms also help marketers manage their display advertising and social budgets, with some even providing support for programmatic TV buying. This creates a varied landscape of vendors, with some focusing on the core channels of Google and Facebook, and others placing bets on the potential of the likes of Amazon to provide a real, third option for digital ad dollars. Though the vendors we analyzed share much in common, there are subtle distinctions within each that make them suitable for different needs. A combination of customer surveys, vendor interviews, and expert opinion from industry veterans has helped us to draw out these nuances to create a transparent view of the current market. Within the guide, you will gain access to:

Follow this link to download the Bid Management Tools Buyers Guide on Search Engine Watch.

from https://searchenginewatch.com/2018/02/28/mystified-by-martech-introducing-the-clickz-buyers-guide-series/


With local search proven to be one of the hottest SEO trends of 2017, it is projected to maintain its standing among make-or-break optimization factors in 2018. The competition between online and brick-and-mortar stores is heating up, and local search optimization can become a decisive factor in how a site ranks locally and, consequently, in how much traffic and clients it drives from local, on-the-go searches. Fortunately, major local search tactics are not that hard to master. Follow the six steps below to achieve the best results in terms of SERPs, traffic, and conversions on the local battlefield.

Claim Google My Business

Failure to claim your company’s account at Google My Business may be the reason your website does not show up in the top spot of Google’s local search results. If you are not listed there (and on Bing Places for Business), you are missing out on incredible opportunities to drive local traffic. With Google’s local three-pack considered to be the coveted spot for every local business, you need to please the Google gods to get listed there:

According to Google My Business guidelines, any business can be unlisted if they violate any of the following rules:

Register with online directories and listings

According to a Local Search Ranking Factors Study 2017 by Moz, link signals play a key role in how sites rank in local search. However, many website owners pay zero attention to online directories and listings, which are a safe source of relevant, high-quality links. The process here is simple:

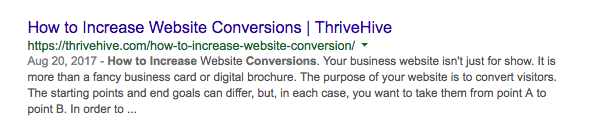

Bonus tip! Like directories and listings, citation data aggregators (CDAs) feed search engines with crucial bits of information about your business, such as your business name, address and phone number (NAP). Ensure that all information you submit to CDAs is consistent. Do not confuse your customers and Google.

Optimize titles and meta descriptions

Titles and meta descriptions are still a biggie in local search. Customizable HTML elements act as ads that define how a page’s content is reflected in search results, and they have to be catchy enough to get clicked. Since titles and meta descriptions are limited to ~50+ and ~160+ characters, they may pose a challenge. These tips should help:
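As a rough aid, drafts can be checked against the approximate length limits quoted above before they are published. The sketch below is only an illustration: the function name `check_snippet` and the default limits of 50 and 160 characters are assumptions taken from the figures in this article, and character counts are only a proxy for what a search engine will actually display.

```python
from dataclasses import dataclass

# Approximate limits, assumed from the ~50 and ~160 character figures above.
TITLE_LIMIT = 50
DESCRIPTION_LIMIT = 160


@dataclass
class SnippetCheck:
    title_length: int
    description_length: int
    title_ok: bool
    description_ok: bool


def check_snippet(title: str, description: str,
                  title_limit: int = TITLE_LIMIT,
                  description_limit: int = DESCRIPTION_LIMIT) -> SnippetCheck:
    """Report whether a title and meta description fit the assumed limits."""
    # Collapse runs of whitespace so stray spaces do not inflate the count.
    title = " ".join(title.split())
    description = " ".join(description.split())
    return SnippetCheck(
        title_length=len(title),
        description_length=len(description),
        title_ok=len(title) <= title_limit,
        description_ok=len(description) <= description_limit,
    )


if __name__ == "__main__":
    # Hypothetical local-business title and description.
    result = check_snippet(
        "Plumber in Leeds | 24/7 Emergency Repairs",
        "Burst pipe or broken boiler? Our local engineers cover all of Leeds "
        "and can usually be with you the same day.",
    )
    print(result)
```

Passing different limits as arguments makes it easy to tighten or relax the check if the displayed snippet length turns out to differ for your pages.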

What it comes down to is this: Even if your business gets a coveted No.1 position in local search thanks to all of your SEO efforts, you still have to incentivize users to click on your link. Masterfully crafted and meaningful titles and meta descriptions can make a big difference.

Collect and manage online reviews

According to BrightLocal’s 2017 Local Consumer Review Survey, 97% of consumers read online reviews for local businesses, with 85% trusting them as much as personal recommendations. Since reviews can become your ultimate weapon for building trust and a positive reputation among your targeted audience, it makes sense to ask for them. As of 2017, 68% of consumers are willing to leave a review when asked by the business (70% in 2016). So where do you start? Implement this simple process to manage your reviews:

Bonus tip! Since consumers read an average of seven reviews before trusting a business, develop a strategy for generating ongoing positive reviews. Make sure to contact happy customers and ask for their reviews to mitigate the effect of negative reviews.

Use local structured data markup

Schema markup, a code used for marking up crucial bits of data on a page to assist search engine spiders in determining a page’s contents, is one of the most powerful but least-utilized SEO methods. With ~10 million websites implementing Schema.org markup, you should start using this leverage against your competition. However, structured data is not simple to master. As of 2017, Schema’s core vocabulary consists of 597 Types, 867 Properties, and 114 Enumeration values. The good news is that Google has developed several tools to help business owners, marketers, and SEO professionals:

Bonus tip! Make LocalBusiness schema your top priority. Particularly, discover specific Types for different businesses below the list of properties.

Appear in local publications and media

On the link-building side of things, content is your most powerful weapon. Reach out to local publications, media sites, and bloggers to serve up content that soothes the pain points of local consumers. You will not only get coverage and reach new audiences, but you will also garner relevant backlinks that push your site up in local searches. Follow this process to amplify your link-building efforts through content marketing:

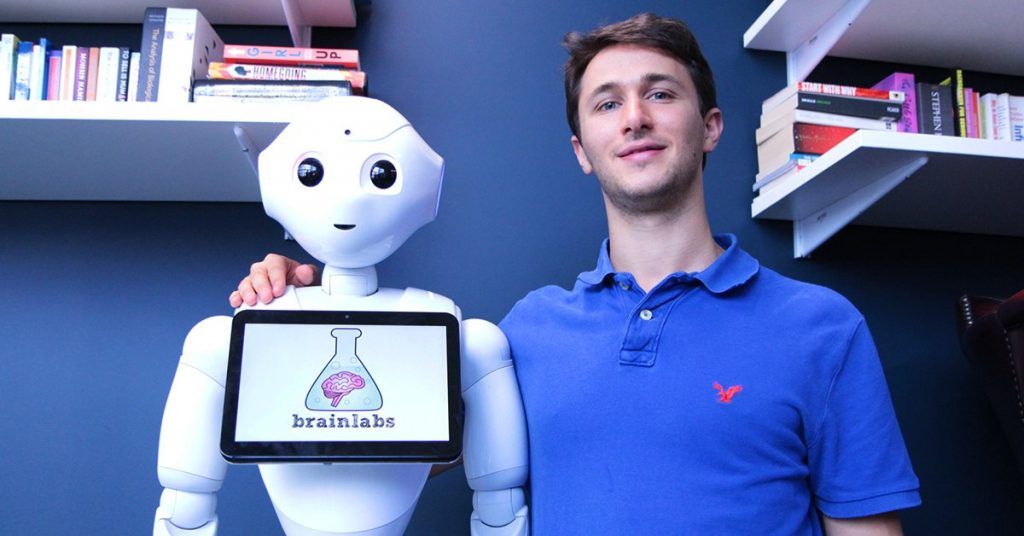

Bonus tip! Consider cooperating with other local businesses to build powerful content. Reach out to your partners to research ideas and create content with meat on its bones. Otherwise, you may fall short of beating out competition from national-level players.

Conclusion

SEO changes all the time, and local search is not much different. However, the six steps above will provide a solid bedrock for your local SEO strategy. Implement these tactics, and you will outperform your competition in local search results.

from https://searchenginewatch.com/2018/02/28/six-steps-to-improving-your-local-search-strategy/

On February 22nd, leading digital media agency Brainlabs hosted the latest in its series of PPC Chat Live events at its London HQ. With speakers from Google, Verve Search, and of course from Brainlabs too, there were plenty of talking points to consider and digest. In this article, we recap the highlights from an enlightening event. The theme for this edition of PPC Chat Live was ‘the state of search’, with the focus squarely on the trends set to shape the industry in 2018 and beyond. The speakers delivered a wide variety of presentations that reflected on the industry’s beginnings, not just for nostalgia’s sake but also to illuminate the future. Brainlabs has carved out a position as an innovative, data-driven search agency and this tone was carried through the evening, all ably assisted by Pepper the robot receptionist.

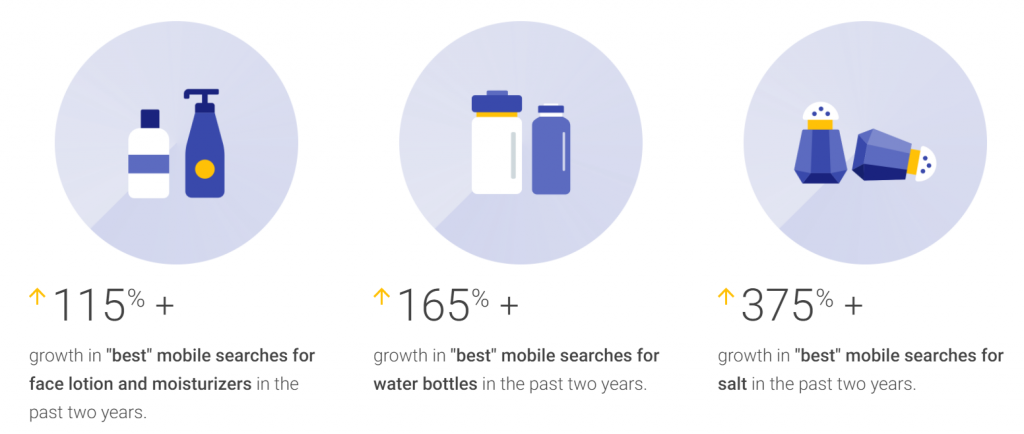

Although paid search took up the majority of air time, there was still plentiful room for ruminations on the evolving role of SEO and what the nature of search tells us about the modern consumer.

Digital assistants: empowering or simply enabling?

Peter Giles from Google opened the evening with a thought-provoking talk on the impact of new technologies on the way people find information. Peter noted that the increased accuracy of voice-enabled digital assistants has led to a range of changes in consumer behavior. Some of these could be seen as empowering, while others perhaps play only to our innate laziness and desire for a friction-free life. There were three core behavioral trends noted within this session:

Increased curiosity

Because people have access to an unprecedented amount of information, they are more inclined to ask questions. When the answers are always close to hand, this is an understandable development. Google has seen some interesting trends over the past two years, including an increase of 150% in search volume for [best umbrellas]. What was once a simple purchase is now subject to a more discerning research process.

Higher expectations

Although there is initial resistance to some technologies that fundamentally change how we live, once we are accustomed to them we quickly start to expect more. In 2015, Google reported that it had seen a 37x increase in the number of searches including the phrase “near me”. Consumers now expect their device to know this intent implicitly and Peter revealed that the growth in “near me” phrases has slowed considerably.

Decreased patience

As expectations grow, patience levels decrease. In fact, there has been an increase of over 200% in searches containing the phrase “open now” since 2015 in the US. Meanwhile, consumers are coming to expect same-day delivery as standard in major metropolitan areas.

Throughout all of these changes, Peter Giles made clear that brands need to focus on being the most helpful, available option for their target audience. By homing in on these areas, the ways in which consumers access the information are not so important. The more significant factor is making this information easy to locate and to surface, whether through search engines, social networks, or digital assistants.

The past, present, and future of PPC and SEO

Brainlabs’ exec chair Jim Brigden reflected on the history of the paid search industry, going back to the early 2000s when most brands were skeptical of the fledgling ad format’s potential. In fact, only £5 million was spent on paid search in the UK as recently as 2001. The industry’s growth, projected to exceed $100 billion globally this year, should also give us reason to pause and consider what will happen next. The pace of change is increasing, so marketers need to be able to adapt to new realities all the time. Jim Brigden’s advice to budding search marketers was to absorb as much new knowledge as possible and remain open to new opportunities, rather than trying to position oneself based on speculation around future trends. Many marketers have specialized in search for well over a decade and, while the industry may have changed dramatically in that time, its core elements remain largely intact. This was a topic touched on by Lisa Myers of Verve Search too, when discussing organic search. For many years, we have discussed the role (and even potential demise) of SEO, as Google moves to foreground paid search to an ever greater degree. Myers’ presentation showcased just how much the SEO industry has changed, from link buying to infographics, through to the modern approach that has as much in common with a creative agency as it does with a web development team. Just one highlight from the team at Verve Search, carried out in collaboration with their client Expedia, was the Unknown Tourism campaign.
Comprised of a range of digital posters, the campaign commemorates animals that have been lost from some of the world’s most popular tourist spots.

Such was the popularity of the campaign, one fan created a package for The Sims video game to make it possible to pin the posters on their computer-generated walls. Verve has received almost endless requests to create and sell the posters, too. This isn’t what most people think of when they think of SEO, but it is a perfect example of how creative campaigns can drive performance. For Expedia, Verve has achieved an average increase in visibility of 54% across all international markets. The core lesson we can take away here from both Jim Brigden and Lisa Myers is that the medium of search remains hugely popular and there is therefore a need for brands to try and stand out to get to the top. The means of doing so may change, but the underlying concepts and objectives remain the same.

The predictable nature of people

For the final part of the evening, Jim Brigden was joined by Dan Gilbert, CEO of Brainlabs and the third most influential person in digital, according to Econsultancy. Dan shared his sophisticated and elucidative perspective on the search industry, which is inextricably linked to the intrinsic nature of people. A variety of studies have shown that people’s behavioral patterns are almost entirely predictable, with one paper noting that “Spontaneous individuals are largely absent from the population. Despite the significant differences in travel patterns, we found that most people are equally predictable.” As irrational and unique as we would like to think we are, most of our actions can be reduced to mathematical equations. That matters for search, when we consider the current state of the industry. After all, companies like Google excel at creating rational systems, such as the machine learning algorithms that continue to grow in prominence across its product suite. As Dan Gilbert stated, this gives good cause to believe that the nature of search will be fundamentally different in the future.
Our digital assistants will have little reason to offer us a choice, if they already know what we want next. That choice is the hallmark of the search industry, but Gilbert sees no reason to create a monetizable tension where no tension needs to exist. Google’s focus has always been on getting the product right and figuring out the commercial aspect once users are on board and this seems likely to be the approach with voice-enabled assistants.

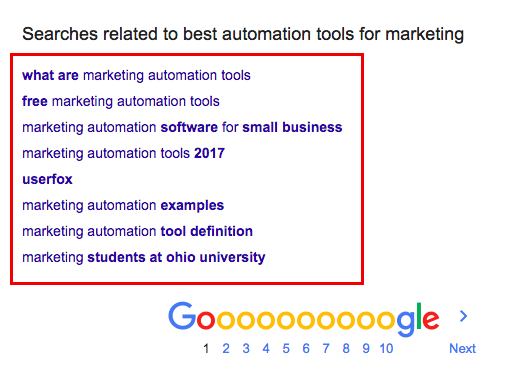

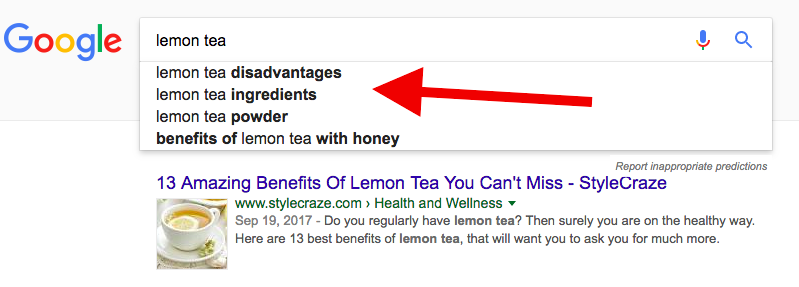

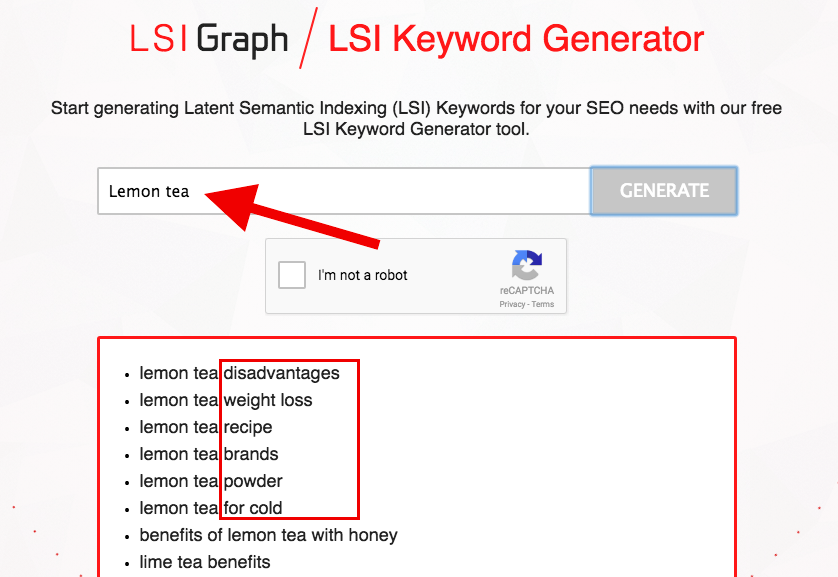

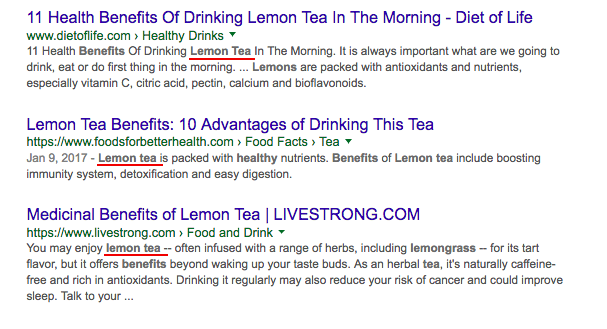

In fact, the technology is already available to preempt these decisions and start serving consumers content and products before they even know they want to receive them. The field of predictive analytics has evolved significantly over the last few years and the capability to model out future behavioral trends is already in use for companies like Netflix and Amazon. The inflection point for this technology is dependent on people’s readiness to accept such a level of intrusion in their daily lives, rather than any innate technological shortcomings. History suggests that, while a certain initial resistance is to be expected, ultimately we will grow accustomed to this assimilation of technology into our lives. And, soon after, we will grow impatient with any limitations we encounter. That will create a seismic shift in how the search industry operates, but it will open up new and more innovative ways to connect consumers with brands.

from https://searchenginewatch.com/2018/02/27/event-recap-state-of-search-with-brainlabs/

Google’s RankBrain is an algorithm that uses machine learning and artificial intelligence to rank results based on feedback from searcher intent and user experience. Diving deep to learn what makes RankBrain tick, here are 15 actionable tips to improve your SEO rankings.

Optimizing keyword research

Keywords have long been the foundation of high-ranking SEO content. Most of your content, whether it be blogs or website copy, begins with hours of research into keywords you could rank for, and outrank your competitors for. However, RankBrain has in some ways changed those run-of-the-mill SEO keyword research strategies you may have used in the past. It is all about searcher intent when it comes to the future of ranking, so it’s time to adjust your keyword research strategy.

1. Rethink synonymous long-tail keywords

Long-tail keywords were once effective, before Google used semantic analysis and understood the meaning of words.
You were able to compile a list of long-tail keywords from Google’s “related searches” at the bottom of a SERP and create a page for each keyword. Unfortunately, synonymous long-tail keywords are not as effective in the RankBrain SEO world. RankBrain’s algorithm is actually quite intelligent when it comes to recognizing very similar long-tail keywords. Instead of ranking a separate page for each keyword, it will deliver pretty much the same results to a user. For example, the long-tail keywords, “best automation tools for marketing” and “best marketing automation tools” will return the same results to satisfy searcher intent. So what do you do instead of leveraging those long-tail keywords? Read on to find out how to do RankBrain-minded keyword research.

2. Leverage medium-sized keywords

Since long-tail keywords are on their way out, you should begin optimizing for root keywords instead. Root keywords are the middle-of-the-pack search terms with higher search volume than long-tail. They are more competitive and may require more links and quality content to rank. For example, let’s say you are crafting an article with “lemon tea” as your primary keyword. Your keyword research should look something like:

You’ll notice that there are a number of medium-sized keywords to choose from. Interestingly, the primary keyword “lemon tea” only nets about 3,600 monthly searches. However, medium-sized keywords like “benefits of lemon” and “honey lemon” drive around 8,000 to 9,000 monthly searches. Using medium-sized keywords in the RankBrain SEO world will also automatically rank your content for a number of other related keywords. If you want to optimize your content for the highest SERP position possible, use medium-tail keywords.

3. Add more LSI keywords

Now before you toss out the idea of long-tail keywords altogether, it is important to understand that they still have some benefits. For instance, they help you identify the LSI keywords RankBrain loves to rank content for.
This doesn’t mean you should keep using those synonymous long-tail keywords, but you should leverage the LSI potential of them. These would be any words or phrases very strongly associated with your topic. Using the previous “lemon tea” example, you can easily identify a number of excellent LSI keywords for that primary keyword. How? Good question! One way is to use Google’s “related searches” and note any words in bold blue. You can also use Google’s drop-down to help you identify commonly searched-for LSI keywords.

You can also use a handy little LSI keyword finder tool called LSI Graph: Simply type in your primary keyword and LSI Graph will return a number of LSI keyword-rich phrases for you to choose from. Between Google and LSI Graph you can compile a number of powerful SEO LSI keywords like:

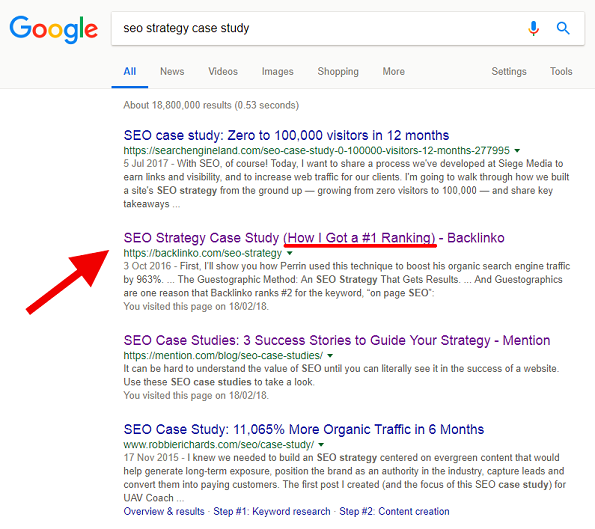

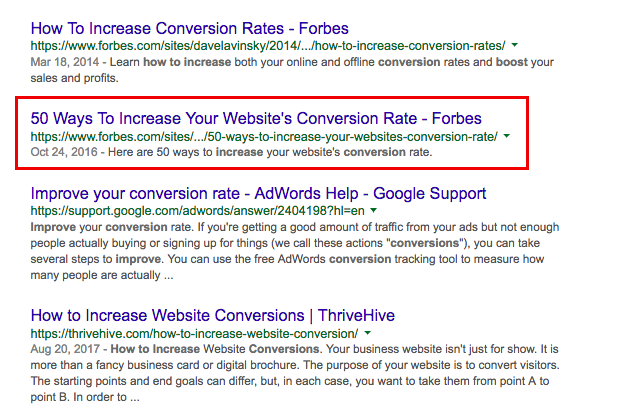

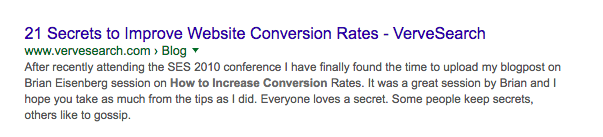

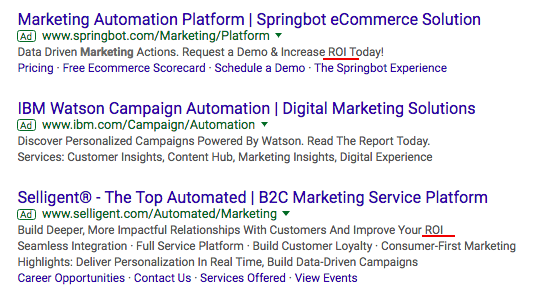

These LSI keywords will give you more keywords, and pages, to rank for, as long as they are not synonymous like in the case of those traditional long-tail keywords.

Optimizing title tags for higher CTR

Organic click-through-rate (CTR) is a major signaling factor. In fact, the very nature of RankBrain is all about how users interact with the content provided in the SERPs. Want to improve your SEO rankings? First improve your CTR! What can you do to ensure your content is netting the CTR it deserves in order to get ranked accordingly? Well, there are actually a number of CTR hacks to improve your SEO rankings.

4. Make your title tags emotional

Putting a little emotion into your title tags can have a big impact on your CTR. Most searchers will land on page one of Google and start scrolling through titles until one hits home emotionally. In fact, a study by CoSchedule found that an emotional score of 40 gets around 1,000 more shares. Emotional score, what is that? CoSchedule actually has a Headline Analyzer to ensure your blog titles, email subject lines, and social posts are appealing to your audience’s emotions. Drawing from our “lemon tea” example, let’s say you type, “7 Lemon Tea Benefits” into the Headline Analyzer. Not a great title, and these were the results from the analyzer tool.

A score of 34 will get me near 1,000 shares, but it could definitely be better. The title definitely needs a bit more emotion and it needs to be longer. Let’s try it again. This time with the title tag, “7 Lemon Tea Weight Loss Benefits for Summer,” which did much better.

It can be challenging to pick a title that brings about emotion. The best way to nail down the perfect title tag is to think like your audience, research other high-ranking titles like yours, and use online tools.

5. Use brackets in your titles

This is definitely an easy one to implement to quickly improve your CTR and SEO rankings.
By using brackets in your post titles, you are drawing more attention to your title among the masses in SERPs. In fact, research by HubSpot and Outbrain found that titles with brackets performed 33 percent better than titles without. This was a study that compiled 3.3 million titles, so quite a large sample. A few bracket examples you can use include, (Step-By-Step Case Study), (With Infographic), (Proven Tips from the Pros), or (How I Got X from Z).

6. Use power words

In the same mindset of developing more emotional title tags to increase CTR, power words are, well, powerful. They have the ability to draw searchers in and will make your headline irresistible. Power words include:

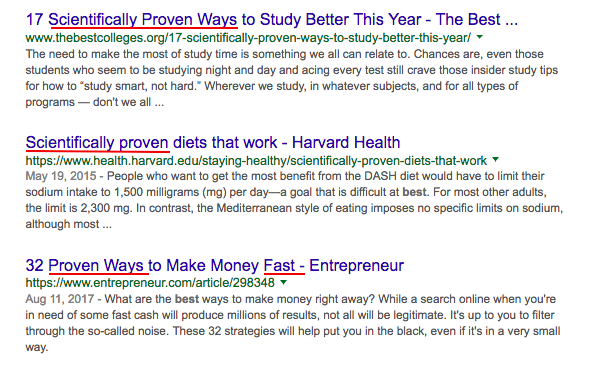

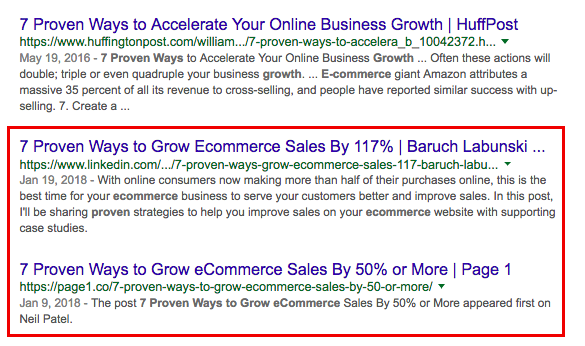

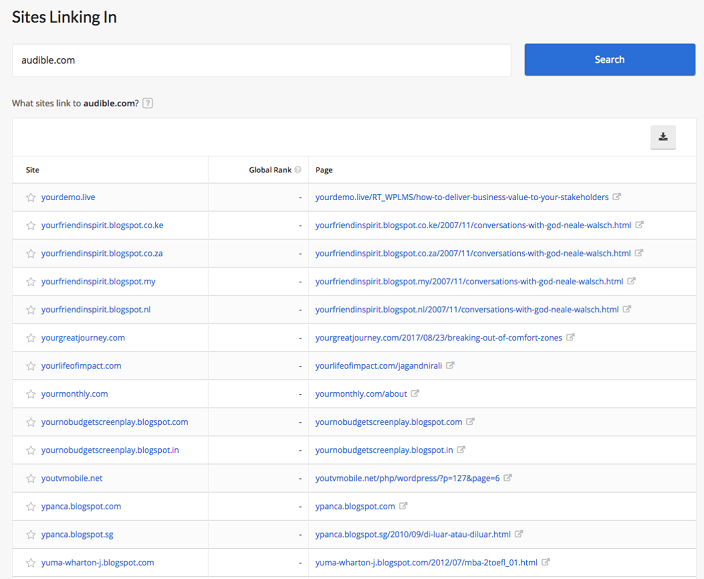

For example, these titles on page one of Google take up the top three positions. Increase your CTR and improve your SEO rankings by adding power words into your post titles. But don’t forget about those numbers either.

7. Use more numbers and statistics in titles

Lists are great, but don’t shy away from adding numbers and statistics to your titles. Numbers in titles highlighting percentages from research or a certain number of days can have a big impact on your content’s CTR. Your headlines could look something like these:

For example, let’s say you are writing content about a new study outlining the benefits of a low glycemic diet for decreasing acne. If the study found 51 percent of participants to have decreased acne after 14 weeks, your title could be, “New Study Found a 51% Decrease in Acne after 14 Weeks.” You can also combine your numbers with power words for the perfect CTR storm. Like this:

With your title tag optimization efforts well under way, it’s time to focus on those very important description tags.

Optimizing description tags for higher CTR

Title tags are not the only aspect of a higher CTR to improve your SEO rankings. Someone may have stopped scrolling at your title, but may read your description tag just to be sure your content is click-worthy. This makes optimizing your description tags a priority.

8. Make your description tag emotional too

Just like your title tag, you want to keep the emotional juices flowing if a searcher reads your description tag. This can be done in a similar fashion as your title tag, using those powerful emotion words that satisfy searcher intent in a meaningful way. Here’s one example:

Not exactly stirring up the emotions you would want if searching for information on how to increase your website’s conversion rates. Now how about this one:

This description tag points out a problem that many business owners have, traffic but not so many conversions.
And finally this description tag:

This longer form approach puts conversions into emotional perspective. It is personal and has a very clear emotional call to action.

9. Highlight benefits and supporting data

Why would anyone want to click on your content based solely on the description tag? This is the mindset that will take your CTR to the next level. Don’t be afraid to highlight the benefits or the supporting data you are serving up.

This is a great example of highlighting where the content data is coming from, as well as the benefits. Creating content after an industry conference is the perfect way to highlight key takeaways for your audience. It also makes developing those emotional description tags easy.

10. Make use of current AdWords content

One description tag hack many people fail to leverage is using keywords and phrases placed in multiple relevant AdWords description tags. If you want to optimize your description tags for improved SEO rankings, this CTR hack is a must. For example, if your content was about marketing automation tools, you could run a quick Google search and find a number of ads. Then examine them to find recurring words or phrases, like “ROI.” This would be a pretty good indicator that you should place ROI somewhere in your description tag. After all, companies are paying thousands of dollars to have these ads up and running daily, so capitalize on their marketing investment.

11. Don’t forget your primary keyword

This should be a no-brainer, but it still gets missed. Placing your primary keyword in your description tag solidifies that your content is indeed going to fulfill searcher intent. Like these examples:

Be sure to place your primary keyword as close to the beginning of your description as possible. You can also sprinkle in a few of your LSI and power keywords as well, if it reads naturally.

Reducing bounce rate and dwell time

The RankBrain algorithm looks at your content CTR and will rank it accordingly.
However, if your content isn’t quality after a user clicks on it, they will “bounce out” quickly and keep searching. This ultimately weeds out any clickbait and emphasizes the need to have a very low bounce rate and long searcher dwell time on page. The more you optimize for these two very important factors, the more your SEO rankings will improve. But what is dwell time? Well, this is how long a searcher will spend on one particular page. Like anything sales minded, you want them to stick around for a while. In fact, the average dwell time of a top 10 Google result is three minutes and ten seconds, according to a Searchmetrics study. How do you get searchers to stick around for three minutes or more? Develop quality, authoritative content that satisfies searcher intent.

12. Place content above the fold

When someone is searching for an answer to their question on Google, they want their answer immediately. This makes having your content (introduction paragraph) above the fold crucial to keeping bounce rate low. An example of what could cause a quick “bounce out”:

You’ll notice that there is no content to be found but the title tag. In fact, the brand logo takes up much of the above the fold area. This could be problematic. Instead, get your content front and center once a searcher lands on your post or page.

This will showcase your introduction right from the get go, making the searcher read on. But how do you hook them? Well, highly engaging introductions.

13. Develop concise and engaging introductions

By placing your content above the fold, your introduction will be the first thing readers will see. This makes hooking them with a concise and engaging introduction essential. This will keep them reading and reduce bounce rate while increasing dwell time. There are three main elements to a powerful introduction: Hook, Transition, and Thesis. The intro hook should pull in the reader. It is specific, brief, and compelling.
For example:

Introduction transitions are usually connectors. They connect the hook to the post’s content and support the title (why a searcher clicked in the first place). A transition looks like this:

The thesis of any post introduction strengthens why the reader should keep reading. Normally, if your transition is powerful, the thesis will simply fall into place. For instance:

Spend some time on your introductions. These are in many ways the most important element of any content and will improve your SEO rankings in the RankBrain world.

14. Long, in-depth content ranks

One way to improve your SEO rankings is to develop longer, more in-depth content. Long-form content also increases your backlink portfolio, according to HubSpot research. More links and higher position on SERPs will definitely have an impact on your SEO rankings. If a reader makes it through your entire post, dwell time will definitely be upwards of three minutes. More in-depth content also showcases your expertise on the topic you’re writing about. This makes you and your brand more authoritative in your industry.

15. Make content easy to digest

You know that long, in-depth content improves dwell time, ranks better, and nets more backlinks. But how do you make 2,000-plus words easy to digest for your readers? The best way to keep your readers from experiencing vertigo on page is to break up your content with lots of subheadings and actionable images. For example, you can do something like this:

Subheadings are very clear and there’s an actionable image that guides readers on just “how-to” achieve the answers to their questions. Another important tip for breaking up your long-form content is to keep paragraphs very short and concise. Paragraphs can be two to three sentences long, or simply one long sentence. The main aim is to ensure readers can avoid eyestrain and take in all the authoritative information you outlined in your content.
This will keep readers on page and increase your dwell time, thus improving your SEO rankings.

Conclusion

If you are ready to adapt your SEO strategies to new developments in AI and the evolution of Google’s algorithms, follow the tips above to start seeing improved rankings. As algorithms evolve, so should your strategy. Have you tried optimizing for RankBrain? What are your favorite tips?

from https://searchenginewatch.com/2018/02/27/15-actionable-seo-tips-to-improve-your-search-rankings/

On February 8th 2018 Google announced that, beginning in July of this year, Chrome will mark all HTTP sites as ‘not secure’, moving in line with Firefox, which implemented this at the beginning of 2017. This now means that the 71% of web users utilizing either browser will be greeted with a warning message when trying to access HTTP websites. Security has always been a top priority for Google. Back in 2014 they officially announced that HTTPS is a ranking factor. This was big, as Google never usually tells us outright what is or isn’t a ranking factor, for fear of people trying to game the system. In truth, any website that stores user data shouldn’t need an extra incentive to prioritize security over convenience. In a previous article for Search Engine Watch, Jessie Moore examined the benefits and drawbacks of migrating your website to HTTPS, and determined that on net, it is well worth making the move. However, if you are yet to make the switch, and nearly 50% of websites still haven’t, we’ve put together this guide to help you migrate to HTTPS.

1. Get a security certificate and install it on the server

I won’t go into detail here as this will vary depending on your hosting and server setup, but it will be documented by your service provider. Let’s Encrypt is a great free, open SSL certificate authority should you want to go down this route.

2. Update all references to prevent mixed content issues

Mixed content is when the initial page is loaded over a secure HTTPS connection, but other resources such as images or scripts are loaded over an insecure HTTP connection. If left unresolved, this is a big issue, as HTTP resources weaken the entire page’s security, making it vulnerable to hacking. Updating internal resources to HTTPS should be straightforward. This can usually be done easily with a find-and-replace database query, or alternatively using the upgrade-insecure-requests CSP directive, which causes the browser to request the HTTPS version of any resource called on the page. External resources, plugins and CDNs will need to be configured and tested manually to ensure they function correctly. Should issues arise with externally controlled references, you only really have three options: include the resource from another host (if available), host the content on your site directly (if you are allowed to do so) or exclude the resource altogether.

3. Update redirects on external links

Any SEO worth their salt will have this at the top of their list, but it is still incredible how often this gets missed. Failure to update redirects on external links will cause every link acquired by the domain to chain, where the redirect jumps from old structure to new, before jumping from HTTP to HTTPS with a second redirect. Each unnecessary step within a sequence of redirects gives Googlebot more of a chance to fail to pass all the ranking signals from one URL to the next. We’ve seen first-hand some of the biggest domains in the world get into issues with redirect chains and lose a spectacular amount of visibility. If you haven’t already audited your backlinks to ensure they all point to a live page within a single redirect step, you can get some big wins from this activity alone. First, make sure you have all your backlink data.
Do not rely on any single tool; we tend to use a minimum of Majestic, Ahrefs and Google Search Console data. Next, run all referred pages through Screaming Frog to check the page still loads and do the following depending on the situation:
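As a rough illustration of the redirect chains described in this step, the Python sketch below walks a redirect map and flags any backlink target that needs more than one hop to reach its final page. The `REDIRECTS` mapping, the example.com URLs and both function names are hypothetical stand-ins for data you would export from a crawl; this is not the output or API of Screaming Frog or any other tool.

```python
# Hypothetical redirect map standing in for crawl data: source URL -> redirect target.
# Any URL that is not a key is treated as a live, non-redirecting page.
REDIRECTS = {
    "http://example.com/old-page": "http://example.com/new-page",
    "http://example.com/new-page": "https://example.com/new-page",
    "http://example.com/about": "https://example.com/about",
}


def redirect_chain(url, redirects, max_hops=10):
    """Return every URL visited, starting with `url`, until a page that does not redirect."""
    chain = [url]
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if target in chain[:-1]:  # redirect loop: stop rather than spin forever
            break
    return chain


def needs_update(url, redirects):
    """True when the link takes more than one redirect hop to resolve."""
    hops = len(redirect_chain(url, redirects)) - 1
    return hops > 1


for link in ("http://example.com/old-page", "http://example.com/about"):
    print(link, "->", redirect_chain(link, REDIRECTS)[-1], "| chained:", needs_update(link, REDIRECTS))
```

In this made-up data, the old-page link chains through two redirects (old structure to new, then HTTP to HTTPS), so its redirect would be a candidate for pointing straight at the final HTTPS URL, while the about link resolves in a single step.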

Finally, any which are working will be handled by the global HTTP to HTTPS redirect, so they do not require additional action.

4. Force HTTPS with redirects

Again, this will vary wildly depending on your setup. CMSs such as WordPress and Magento will handle this for you automatically within the admin panel. Otherwise, you may need to update your .htaccess or web.config files with a rule redirect, but this will be well documented.

One common issue we see with rule redirection is having separate rules for forcing HTTPS and for forcing www. This will cause chains where first www. is added to the URL, then HTTPS is forced in a second step. Ensure you update any rule redirects to point to HTTPS as the destination to prevent this issue.

5. Enable HSTS

Using redirection alone to force HTTPS can still leave the system vulnerable to downgrade attacks, where hackers force the site to load an insecure version. HTTP Strict Transport Security (HSTS) is a web server directive, sent as a response header, which instructs browsers to request all resources through HTTPS.

You will need a valid SSL certificate, which must be valid for all subdomains. Providing you’ve done this, you’ll then need to add a line of code to your .htaccess or web.config file.

6. Enable OCSP

Online certificate status protocol (OCSP) improves upon the certificate revocation list (CRL). With the CRL, browsers had to check the list for any issues with the server’s SSL certificate, but this meant downloading the entire list and comparing, which is inefficient from both a bandwidth and an accuracy perspective. OCSP overcomes these inefficiencies by only querying the certificate in question, as well as allowing a grace period should the certificate have expired.

7. Add HTTP/2

Hypertext transfer protocol (HTTP) is the set of rules used by the web which governs how messages are formatted and transmitted between servers and browsers. HTTP/2 allows for significant performance increases due, in part, to the ability to process multiple requests simultaneously.
For example, it is possible to send resources which the client has not requested yet and save them in the cache, which prevents network round trips and reduces latency. It is estimated that load times on HTTP/2 sites are 50% to 70% better than on HTTP/1.1.

8. Update XML sitemaps, canonical tags, hreflang, sitemap references in robots.txt

The above should be fairly self-explanatory, and probably would have all been covered within point two. However, because this is an SEO blog, I will labor the point. Making sure XML sitemaps, canonical tags, hreflang and sitemap references within the robots.txt are updated to point to HTTPS is very important. Failure to do so will double the number of requests Googlebot makes to your website, wasting crawl budget on inaccessible pages and taking focus away from areas of your site you want Googlebot to see.

9. Add HTTPS versions to Google Search Console and update disavow file and any URL parameter settings

This is another common error we see. Google Search Console (GSC) is a brilliant free tool which every webmaster should be using, but importantly, it only works on a subdomain level. This means if you migrate to HTTPS and you don’t set up a new account to reflect this, the information within your GSC account will not reflect your live site.

This can be massively exacerbated should you have previously had a toxic backlink profile which required a disavow file. Guess what? If you don’t set up an HTTPS GSC profile and upload your disavow file to it, the new subdomain will be vulnerable.

Similarly, if you have a significant number of parameters on your site which Googlebot struggles to crawl, unless you set up parameter settings in your new GSC account, this site will be susceptible to crawl inefficiencies and indexation bloat. Make sure you set up your GSC account and update all the information accordingly.

10. Change default URL in GA & update social accounts, paid media, email, etc.

Finally, you’ll need to go through and update any references to your website on any apps, social media and email providers to ensure users are not unnecessarily redirected.

It goes without saying that any migration should be done within a test environment first, allowing any potential bugs to be resolved in a non-user-facing environment. At Zazzle Media, we have found that the websites with the most success in migrating to HTTPS are the ones that follow a methodical approach to ensure all risks have been tested and resolved prior to full rollout of changes.

Make sure you follow the steps in this guide systematically, and don’t cut corners; you’ll reap the rewards in the form of a more secure website, better user trust, and an improved ranking signal to boot.

from https://searchenginewatch.com/2018/02/26/migrating-http-to-https-a-guide/

What a useless article! Anyone worth their salt in the SEO industry knows that a blinkered focus on keywords in 2018 is a recipe for disaster.

Sure, I couldn’t agree with you more, but when you dive into the subject it uncovers some interesting issues. If you work in the industry you will no doubt have had the conversation with someone who knows nothing about SEO, who subsequently says something along the lines of: “SEO? That’s search engine optimization. It’s where you put your keywords on your website, right?”

Extended dramatic sigh. Potentially a hint of aloof eye rolling.

It is worth noting that when we mention ‘keywords’ we are referring to exact match keywords, usually of the short tail variety and often high-priority transactional keywords. To set the scene, I thought it would be useful to sketch out a polarized situation:

Side one: Include your target keyword as many times as possible in your content. Google loves the keywords*.
Watch your website languish in mid-table obscurity and scratch your head wondering why it ain’t working; it all seemed so simple. (*not really)

Side two: You understand that Google is smarter than just counting the number of keywords that exactly match a search. So you write for the user... creatively, with almost excessive flair. Your content is renowned for its cryptic and subconscious messaging. It’s so subconscious that a machine doesn’t have a clue what you’re talking about. Replicate results for Side One. Cue similar head scratching.

Let’s start with side one. White Hat (and successful) SEO is not about ‘gaming’ Google, or other search engines for that matter. You have to give Doc Brown a call and hop in the DeLorean back to the early 2000s if that’s the environment you’re after.

Search engines are focused on providing the most relevant and valuable results for their users. As a by-product, they have shut down, and are still actively shutting down, opportunities for SEOs to manipulate the search results through underhanded tactics. What are underhanded tactics? I define them as tactics that don’t provide value to the user; they are only employed to manipulate the search results.

Here’s why purely focusing on keywords is outdated

Simply put, Google’s search algorithm is more advanced than counting the number of keyword matches on a page. It is more advanced than assessing keyword density as well. Their voracious digital Panda was the first really famous update to highlight to the industry that they would not accept keyword stuffing.

Panda was the first, but certainly not the last. Since 2011 there have been multiple updates that have herded the industry away from the dark days of keyword stuffing to the concept of user-centric content. I won’t go into heavy detail on each one, but have included links to more information if you so desire:

Hummingbird, Latent Semantic Indexing and Semantic Search

Google understands synonyms; that was relatively easy for them to do.
They didn’t stop there, though. Hummingbird helps them to understand the real meaning behind a search term instead of the keywords or synonyms involved in the search.

RankBrain

Supposedly one of the three most important ranking factors for Google, RankBrain is machine learning that helps Google, once again, understand the true intent behind a search term.

All of the above factors have led to an industry that is focused more on the complete search term and satisfying the user intent behind it, as opposed to focusing purely on the target keyword.

As a starting point, content should always be written for the user first. Focus on task completion for the user, or as Moz described it in their Whiteboard Friday, ‘Search Task Accomplishment’. Keywords (or search terms) and associated phrases can be included later if necessary; more on this below.

Writing user-centric content pays homage to more than just the concept of ranking for keywords. A lot of us want the user to complete an action, or at the very least return to our website in the future. Even if keyword stuffing worked (it doesn’t), you might get more traffic but would struggle to convert your visitors due to the poor quality of your content.

So should we completely ignore keywords?

Well, no, and that’s not me backtracking. All of the above advice is legitimate. The problem is that it just isn’t that simple.

The first point to make is that if your content is user-centric, your keyword (and related phrases) will more than likely occur naturally. You may have to play a bit of a balancing act to make sure that you don’t end up on ‘Side Two’ mentioned at the beginning of this article.

Google’s algorithm is very clever, but in the end it is still a machine. If your content is a bit too weird and wonderful, it can have a negative impact on your ability to attract the appropriate traffic, because it is simply too complex for Google to understand which search terms to rank your website for.
This balancing act can take time and experience. You don’t want to include keywords for the sake of it, but you don’t want to make Google’s life overly hard. Experiment, analyse, iterate.

Another consideration for this more ‘cryptic’ content is how it is applied to your page and its effect on user experience. Let’s look at a couple of examples below:

Metadata

Sure, more clickbait-y titles and descriptions may help attract a higher CTR, but don’t underestimate the power of highlighted keywords in your metadata in SERPs. If a user searches for a particular search term, on a basic level they are going to want to see this replicated in the SERPs.

Delivery to the user

In the same way that you don’t want to make Google’s life overly difficult, you also want to deliver your message as quickly as possible to the user. If your website doesn’t display content relevant to the user’s search term, you run the risk of them bouncing. This, of course, can differ between industries and according to the layout/design of your page.

Keywords or no keywords?

To sum up, SEO is far more complex than keywords. Focusing on satisfying user intent will produce far greater results for your SEO in 2018 than a focus on keywords. You need to pay homage to the ‘balancing act’, but if you follow the correct user-centric processes, this should be a relatively simple task.

Are keywords still relevant in 2018? They can be helpful in small doses and with strategic inclusion, but there are more powerful factors out there.

from https://searchenginewatch.com/2018/02/26/are-keywords-still-relevant-to-seo-in-2018/

“Hey Siri, what is the cost of an iPad near me?”

In today’s internet, a number of specialist search engines exist to help consumers search for and compare things within a specific niche.
As well as search engines like Google and Bing which crawl the entire web, we have powerful vertical-specific search engines like Skyscanner, Moneysupermarket and Indeed that specialize in surfacing flights, insurance quotes, jobs, and more.

Powerful though web search engines can be, they aren’t capable of delivering the same level of dedicated coverage within a particular industry that vertical search engines are. As a result, many vertical-specific search engines have become go-to destinations for finding a particular type of information – above and beyond even the all-powerful Google.

Yet until recently, one major market remained unsearchable: prices. If you ask Siri to tell you the cost of an iPad near you, she won’t be able to provide you with an answer, because she doesn’t have the data. Until now, a complete view of prices on the internet has never existed.

Enter Pricesearcher, a search engine that has set out to solve this problem by indexing all of the world’s prices. Pricesearcher provides searchers with detailed information on products, prices, price histories, payment and delivery information, as well as reviews and buyers’ guides to aid in making a purchase decision.

Founder and CEO Samuel Dean calls Pricesearcher “The biggest search engine you’ve never heard of.” Search Engine Watch recently paid a visit to the Pricesearcher offices to find out about the story behind the first search engine for prices, the technical challenge of indexing prices, and why the future of search is vertical.

Pricesearcher: The early days

A product specialist by background, Samuel Dean spent 16 years in the world of ecommerce. He previously held a senior role at eBay as Head of Distributed Ecommerce, and has carried out contract work for companies including Powa Technologies, Inviqa and the UK government department UK Trade & Investment (UKTI). He first began developing the idea for Pricesearcher in 2011, purchasing the domain Pricesearcher.com in the same year.
However, it would be some years before Dean began work on Pricesearcher full-time. Instead, he spent the next few years taking advantage of his ecommerce connections to research the market and understand the challenges he might encounter with the project. “My career in e-commerce was going great, so I spent my time talking to retailers, speaking with advisors – speaking to as many people as possible that I could access,” explains Dean. “I wanted to do this without pressure, so I gave myself the time to formulate the plan whilst juggling contracting and raising my kids.” More than this, Dean wanted to make sure that he took the time to get Pricesearcher absolutely right. “We knew we had something that could be big,” he says. “And if you’re going to put your name on a vertical, you take responsibility for it.” Dean describes himself as a “fan of directories”, relating how he used to pore over the Yellow Pages telephone directory as a child. His childhood also provided the inspiration for Pricesearcher in that his family had very little money while he was growing up, and so they needed to make absolutely sure they got the best price for everything. Dean wanted to build Pricesearcher to be the tool that his family had needed – a way to know the exact cost of products at a glance, and easily find the cheapest option. “The world of technology is so advanced – we have self-driving cars and rockets to Mars, yet the act of finding a single price for something across all locations is so laborious. Which I think is ridiculous,” he explains. Despite how long it took to bring Pricesearcher to inception, Dean wasn’t worried that someone else would launch a competitor search engine before him. “Technically, it’s a huge challenge,” he says – and one that very few people have been willing to tackle. 
There is a significant lack of standardization in the ecommerce space, in the way that retailers list their products, the format that they present them in, and even the barcodes that they use. But rather than solve this by implementing strict formatting requirements for retailers to list their products, making them do the hard work of being present on Pricesearcher (as Google and Amazon do), Pricesearcher was more than willing to come to the retailers.

“Our technological goal was to make listing products on Pricesearcher as easy as uploading photos to Facebook,” says Dean. As a result, most of the early days of Pricesearcher were devoted to solving these technical challenges for retailers, and standardizing everything as much as possible.

In 2014, Dean found his first collaborator to work with him on the project: Raja Akhtar, a PHP developer working on a range of ecommerce projects, who came on board as Pricesearcher’s Head of Web Development. Dean found Akhtar through the freelance website People Per Hour, and the two began working on Pricesearcher together in their spare time, putting together the first lines of code in 2015. The beta version of Pricesearcher launched the following year.

For the first few years, Pricesearcher operated on a shoestring budget, funded entirely out of Dean’s own pocket. However, this didn’t mean that there was any compromise in quality. “We had to build it like we had much more funding than we did,” says Dean. They focused on making the user experience natural, and on building a tool that could process any retailer product feed regardless of format.

Dean knew that Pricesearcher had to be the best product it could possibly be in order to be able to compete in the same industry as the likes of Google. “Google has set the bar for search – you have to be at least as good, or be irrelevant,” he says.

PriceBot and price data

Pricesearcher initially built up its index by directly processing product feeds from retailers.
Some early retail partners who joined the search engine in its first year included Amazon, Argos, IKEA, JD Sports, Currys and Mothercare. (As a UK-based search engine, Pricesearcher has primarily focused on indexing UK retailers, but plans to expand more internationally in the near future.)

In the early days, indexing products with Pricesearcher was a fairly lengthy process, taking about 5 hours per product feed. Dean and Akhtar knew that they needed to scale things up dramatically, and in 2015 began working with a freelance DevOps engineer, Vlassios Rizopoulos, to do just that. Rizopoulos’ work sped up the process of indexing a product feed from 5 hours to around half an hour, and then to under a minute.

In 2017 Rizopoulos joined the company as its CTO, and in the same year launched Pricesearcher’s search crawler, PriceBot. This opened up a wealth of additional opportunities for Pricesearcher, as the bot was able to crawl any retailers who didn’t come to them directly, and from there, start a conversation.

“We’re open about crawling websites with PriceBot,” says Dean. “Retailers can choose to block the bot if they want to, or submit a feed to us instead.” For Pricesearcher, product feeds are preferable to crawl data, but PriceBot provides an option for retailers who don’t have the technical resources to submit a product feed, as well as opening up additional business opportunities.

PriceBot crawls the web daily to get data, and many retailers have requested that PriceBot crawl them more frequently in order to get the most up-to-date prices. Between the accelerated processing speed and the additional opportunities opened up by PriceBot, Pricesearcher’s index went from 4 million products in late 2016 to 500 million in August 2017, and now numbers more than 1.1 billion products. Pricesearcher is currently processing 2,500 UK retailers through PriceBot, and another 4,000 using product feeds.
All of this gives Pricesearcher access to more pricing data than has ever been accumulated in one place – Dean is proud to state that Pricesearcher has even more data at its disposal than eBay. The data set is unique, as no-one else has set out to accumulate this kind of data about pricing, and the possible insights and applications are endless.

At Brighton SEO in September 2017, Dean and Rizopoulos gave a presentation entitled, ‘What we have learnt from indexing over half a billion products’, presenting data insights from Pricesearcher’s initial 500 million product listings. The insights are fascinating for both retailers and consumers: for example, Pricesearcher found that the average length of a product title was 48 characters (including spaces), with product descriptions averaging 522 characters, or 90 words.

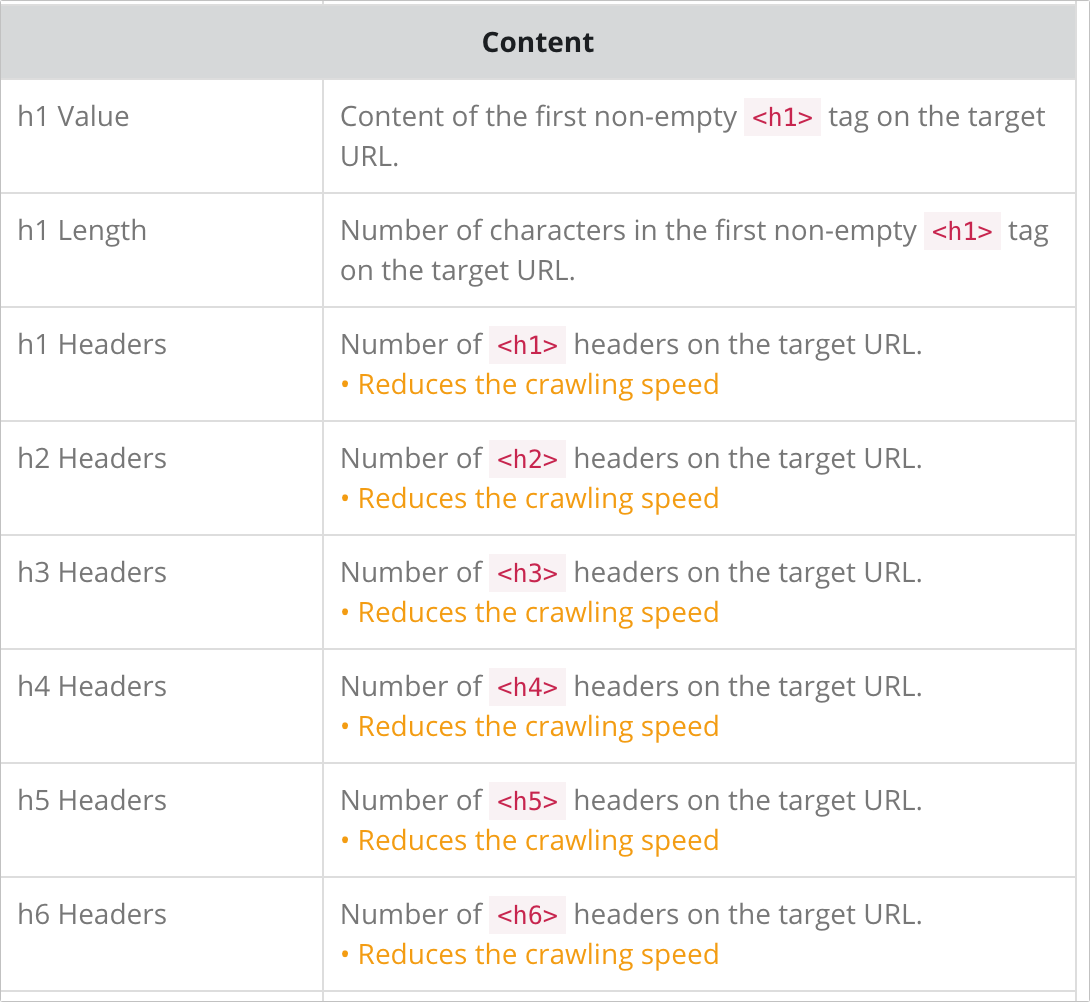

Less than half of the products indexed – 44.9% – included shipping costs as an additional field, and two-fifths of products (40.2%) did not provide dimensions such as size and color. Between December 2016 and September 2017, Pricesearcher also recorded 4 billion price changes globally, with the UK ranking top as the country with the most price changes – one every six days. It isn’t just Pricesearcher who have visibility over this data – users of the search engine can benefit from it, too. On February 2nd, Pricesearcher launched a new beta feed which displays a pricing history graph next to each product. This allows consumers to see exactly what the price of a product has been throughout its history – every rise, every discount – and use this to make a judgement about when the best time is to buy. “The product history data levels the playing field for retailers,” explains Dean. “Retailers want their customers to know when they have a sale on. This way, any retailer who offers a good price can let consumers know about it – not just the big names. “And again, no-one else has this kind of data.” As well as giving visibility over pricing changes and history, Pricesearcher provides several other useful functions for shoppers, including the ability to filter by whether a seller accepts PayPal, delivery information and a returns link. This is, of course, if retailers make this information available to be featured on Pricesearcher. The data from Pricesearcher’s initial 500 million products shed light on many areas where crucial information was missing from a product listing, which can negatively impact a retailer’s visibility on the search engine. Like all search engines, Pricesearcher has ranking algorithms, and there are certain steps that retailers can take to optimize for Pricesearcher, and give themselves the best chance of a high ranking. With that in mind, how does ‘Pricesearcher SEO’ work? 
How to rank on Pricesearcher

At this stage in its development, Pricesearcher wants to remove the mystery around how retailers can rank well on its search engine. Pricesearcher’s Retail Webmaster and Head of Search, Paul Lovell, is currently focused on developing ranking factors for Pricesearcher, and conceptualizing an ideal product feed. The team are also working with select SEO agencies to educate them on what a good product feed looks like, and educating retailers about how they can improve their product listings to aid their Pricesearcher ranking.

Retailers can choose to either go down the route of optimizing their product feed for Pricesearcher and submitting that, or optimizing their website for the crawler. In the latter case, only a website’s product pages are of interest to Pricesearcher, so optimizing for Pricesearcher translates into optimizing product pages to make sure all of the important information is present.

At the most basic level, retailers need to have the following fields in order to rank on Pricesearcher: A brand, a detailed product title, and a product description. Category-level information (e.g. garden furniture) also needs to be present – Pricesearcher’s data from its initial 500 million products found that category-level information was not provided in 7.9% of cases. If retailers submit location data as well, Pricesearcher can list results that are local to the user. Additional fields that can help retailers rank are product quantity, delivery charges, and time to deliver – in short, the more data, the better. A lot of ‘regular’ search engine optimization tactics also work for Pricesearcher – for example, implementing schema.org markup is very beneficial in communicating to the crawler which fields are relevant to it. It’s not only retailers who can rank on Pricesearcher; retail-relevant webpages like reviews and buying guides are also featured on the search engine. Pricesearcher’s goal is to provide people with as much information as possible to make a purchase decision, but that decision doesn’t need to be made on Pricesearcher – ultimately, converting a customer is seen as the retailer’s job. Given Pricesearcher’s role as a facilitator of online purchases, an affiliate model where the search engine earns a commission for every customer it refers who ends up converting seems like a natural way to make money. Smaller search engines like DuckDuckGo have similar models in place to drive revenue. However, Dean is adamant that this would undermine the neutrality of Pricesearcher, as there would then be an incentive for the search engine to promote results from retailers who had an affiliate model in place. Instead, Pricesearcher is working on building a PPC model for launch in 2019. 
The search engine is planning to offer intent-based PPC to retailers, which would allow them to opt in to find out about returning customers, and serve an offer to customers who return and show interest in a product. Other than PPC, what else is on the Pricesearcher roadmap for the next few years? In a word: lots.

The future of search is vertical

The first phase of Pricesearcher’s journey was all about data acquisition – partnering with retailers, indexing product feeds, and crawling websites. Now, the team are shifting their focus to data science, applying AI and machine learning to Pricesearcher’s vast dataset.

Head of Search Paul Lovell is an analytics expert, and the team are recruiting additional data scientists to work on Pricesearcher, creating training data that will teach machine learning algorithms how to process the dataset. “It’s easy to deploy AI too soon,” says Dean, “but you need to make sure you develop a strong baseline first, so that’s what we’re doing.”

Pricesearcher will be out of beta by December of this year, by which time the team intend to have all of the prices in the UK (yes, all of them!) listed in Pricesearcher’s index. After the search engine is fully launched, the team will be able to learn from user search volume and use that to refine the search engine.

The Pricesearcher rocket ship – founder Samuel Dean built this by hand to represent the Pricesearcher mission. It references a comment made by Eric Schmidt to Sheryl Sandberg when she interviewed at Google. When she told him that the role didn’t meet any of her criteria and asked why she should work there, he replied: “If you’re offered a seat on a rocket ship, don’t ask what seat. Just get on.”

At the moment, Pricesearcher is still a well-kept secret, although retailers are letting people know that they’re listed on Pricesearcher, and the search engine receives around 1 million organic searches on a monthly basis, with an average of 4.5 searches carried out per user.
Voice and visual search are both on the Pricesearcher roadmap; voice is likely to arrive first, as a lot of APIs for voice search are already in place that allow search engines to provide their data to the likes of Alexa, Siri and Cortana. However, Pricesearcher are also keen to hop on the visual search bandwagon as Google Lens and Pinterest Lens gain traction. Going forward, Dean is extremely confident about the game-changing potential of Pricesearcher, and moreover, believes that the future of the industry lies in vertical search. He points out that in December 2016, Google’s parent company Alphabet specifically identified vertical search as one of the biggest threats to Google. “We already carry out ‘specialist searches’ in our offline world, by talking to people who are experts in their particular field,” says Dean. “We should live in a world of vertical search – and I think we’ll see many more specialist search engines in the future.” from https://searchenginewatch.com/2018/02/23/pricesearcher-the-biggest-search-engine-youve-never-heard-of/ As an SEO professional, your role will invariably lead you to interactions with people in a wide variety of roles including business owners, marketing managers, content creators, link builders, PR agencies, and developers. That last one – developers – is a catch-all term that can encompass software engineers, coders, programmers, front- and back-end developers, and IT professionals of various types. These are the folks who write the code and/or generally manage the underlying various web technologies that comprise and power websites. In your role as an SEO, it may or may not be practicable for you to completely master programming languages such as C++ and Java, or scripting languages such as PHP and JavaScript, or markup languages such as HTML, XML, or the stylesheet language CSS. 
And, there are many more programming, scripting, and markup languages out there – it would be a Herculean task to be a master of every kind of language, even if your role is full-time programmer and not SEO.

But, it is essential for you, as an SEO professional, to understand the various languages and technologies and technology stacks out there that comprise the web. When you’re making SEO recommendations, which developers will most likely be executing, you need to understand their mindset, their pain points, what their job is like – and you need to be able to speak their language.

You don’t have to know everything developers know, but you should have a good grasp of what developers do so that you can ask better questions and provide SEO recommendations in a way that resonates with them, and those recommendations are more likely to be executed as a result. When you speak their language, and understand what their world is like, you’re contributing to a collaborative environment where everyone’s pulling on the same side of the rope for the same positive outcomes.

And of course, aside from building collaborative relationships, being a professional SEO involves a lot of technical detective work and problem detection and prevention, so understanding various aspects of web technology is not optional; it’s mandatory.

Web tech can be complex and intimidating, but hopefully this guide will help make things a little easier for you and fill in some blanks in your understanding. Let’s jump right in!

The internet vs. the World Wide Web

Most people use these terms interchangeably, but technically the two terms do not mean the same thing, although they are related. The Internet began as a decentralized network of independent interconnected computers.
The US Department of Defense was involved over time and awarded contracts, including one for the development of the ARPANET (Advanced Research Projects Agency Network) project, which was an early packet-switching network and the first to use TCP/IP (Transmission Control Protocol and Internet Protocol). The ARPANET project led to “internetworking”, where various networks of computers could be joined into a larger “network of networks”. The development of the World Wide Web is credited to British computer scientist Sir Tim Berners-Lee in the 1980s; he developed a system of linked hypertext documents, which resulted in an information-sharing model built “on top” of the internet. Documents (web pages) were specified to be formatted in a markup language called “HTML” (Hypertext Markup Language), and could be linked to each other using “hyperlinks” that users could click to navigate to other web pages.

Web hosting

Web hosting, or hosting for short, refers to services that allow people and businesses to put a web page or a website on the internet. Hosting companies have banks of computers called “servers” that are not entirely dissimilar in nature to computers you’re already familiar with, but of course there are differences. There are various types of web hosting companies that offer a range of services in addition to web hosting; such services may include domain name registration, website builders, email addresses, website security services, and more. In short, a host is where websites are published.

Web servers

A web server is a computer that stores web documents and resources. Web servers receive requests from clients (browsers) for web pages, images, etc. When you visit a web page, your browser requests all the resources/files needed to render that web page in your browser.
It goes something like this:

Client (browser) to server: “Hey, I want this web page, please provide all the text, images and other stuff you have for that page.”
Server to client: “Okay, here it is.”

Various factors impact how quickly the web page will display (render), including the speed of the server and the size(s) of the various files being requested. There are three server types you’ll most often encounter:

Server log files

Often shortened to “log files”, these are records of server activity in response to requests made for web pages and associated resources such as images. Some servers may already be configured to record this activity; others will need to be configured to do so. Log files are the “reality” of what’s happening with a website and will include information such as the page or file requested, the date and time stamp of the request, the user agent making the request, the response type (found, error, redirected, etc.), the referrer, and a few other items such as bytes served and client IP address. SEOs should get familiar with parsing log files. To go into this topic in more detail, read JafSoft’s explanation of a web server log file sample.

FTP

FTP stands for File Transfer Protocol, and it’s how you upload resource files such as web pages, images, XML Sitemaps, robots.txt files, and PDF files to your web hosting account to make these files available and viewable on the web via browsers. There are free FTP software programs you can use for this purpose. The interface is a familiar file-folder tree structure where you’ll see your local machine’s files on the left, and the remote server’s files on the right. You can drag and drop local files to the server to upload. Voila, you’ve put files onto the internet! For more detail, Wired has an excellent guide on FTP for beginners.

Domain name

A domain name is a string of (usually) text that identifies a website and is used in a URL (Uniform Resource Locator). Keeping this simple, for the URL https://www.website.com, “website.com” is the domain name. For more detail, check out the Wikipedia article on domain names.

Root domain & subdomain

A root domain is what we commonly think of as a domain name, such as “website.com” in the URL https://www.website.com. A subdomain is the www. part of the URL. Other examples of subdomains would be news.website.com, products.website.com, support.website.com and so on.
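To make the root domain and subdomain distinction concrete, here is a minimal Python sketch that splits a URL into its parts using the standard library. The function name `describe_url` is hypothetical, and the sketch assumes the root domain is always the last two labels of the hostname; that holds for website.com but not for suffixes such as .co.uk, so treat it as an illustration rather than a robust parser.

```python
from urllib.parse import urlsplit


def describe_url(url: str) -> dict:
    """Split a URL into scheme, subdomain, root domain, and path.

    Assumption: the root domain is the last two labels of the hostname
    (e.g. "website.com"). Multi-part suffixes like ".co.uk" would need
    a public-suffix list, which this sketch does not use.
    """
    parts = urlsplit(url)
    host = parts.hostname or ""
    labels = host.split(".")
    return {
        "scheme": parts.scheme,
        "subdomain": ".".join(labels[:-2]),    # everything before the root domain
        "root_domain": ".".join(labels[-2:]),  # naive: last two labels
        "path": parts.path,
    }


if __name__ == "__main__":
    for example in (
        "https://www.website.com",
        "https://news.website.com/this-is-a-page",
    ):
        print(describe_url(example))
```

Running it on the example URLs from this section shows “www” and “news” reported as subdomains of the same root domain.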
For more information on the difference between a domain and a subdomain, check out this video from HowTech.

URL vs. URI

URL stands for “Uniform Resource Locator” (such as https://www.website.com/this-is-a-page) and URI stands for “Uniform Resource Identifier”. Strictly speaking, URI is the broader category and every URL is a URI, but in everyday developer usage “URI” often refers to just the path portion of a full URL (such as /this-is-a-page.html).

HTML, CSS, and JavaScript

I’ve grouped together HTML, CSS, and JavaScript here not because each doesn’t deserve its own section, but because it’s good for SEOs to understand that those three languages are what comprise much of how modern web pages are coded (with many exceptions of course, and some of those will be noted elsewhere here). HTML stands for “Hypertext Markup Language”, and it’s the original and foundational language of web pages on the World Wide Web. CSS stands for “Cascading Style Sheets” and is a style sheet language used to style and position HTML elements on a web page, enabling separation of presentation and content. JavaScript (not to be confused with the programming language “Java”) is a client-side scripting language used to create interactive features on web pages.

AJAX & XML

AJAX stands for “Asynchronous JavaScript And XML”. Asynchronous means the client/browser and the server can work and communicate independently, allowing the user to continue interacting with the web page regardless of what’s happening on the server. JavaScript is used to make the asynchronous server requests, and when the server responds, JavaScript modifies the page content displayed to the user. Data sent asynchronously from the server to the client was originally packaged in an XML format (JSON is now common as well), so it can be easily processed by JavaScript. This reduces the traffic between the client and the server, which improves response time and speed. XML stands for “Extensible Markup Language” and is similar to HTML in its use of tags, elements, and attributes; it was designed to both store and transport data, whereas HTML is used to display data.
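As a rough illustration of how XML stores data in tags, elements, and attributes, the following Python sketch parses a small XML document with the standard library’s ElementTree module. The `catalog` document, its element names, and the `read_products` helper are all made up for this example.

```python
import xml.etree.ElementTree as ET

# A made-up XML document: <product> elements carry a "sku" attribute,
# and nested <name> and <price> elements hold the data itself.
XML_DOC = """<catalog>
  <product sku="A100">
    <name>Blue Widget</name>
    <price currency="USD">19.99</price>
  </product>
  <product sku="B200">
    <name>Red Widget</name>
    <price currency="USD">24.50</price>
  </product>
</catalog>"""


def read_products(xml_text: str) -> list:
    """Return one dict per <product> element in the document."""
    root = ET.fromstring(xml_text)
    products = []
    for product in root.findall("product"):
        price = product.find("price")
        products.append({
            "sku": product.get("sku"),            # attribute on the element
            "name": product.findtext("name"),     # text inside a child element
            "price": float(price.text),
            "currency": price.get("currency"),
        })
    return products


if __name__ == "__main__":
    for item in read_products(XML_DOC):
        print(item)
```

Unlike HTML, nothing here says how the data should look on screen; the markup only describes what the data is.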
For the purposes of SEO, the most common usage of XML is in XML Sitemap files.

Structured data (AKA, Schema.org)

Structured data is markup you can add to the HTML of a page to help search engines better understand the content of the page, or at least certain elements of that page. By using the approved standard formats, you provide additional information that makes it easier for search engines to parse the pertinent data on the page. Common uses of structured data are to mark up certain aspects of recipes, literary works, products, places, events of various types, and much more. Schema.org was launched on June 2, 2011, as a collaborative effort by Google, Bing and Yahoo (soon after joined by Yandex) to create a common, agreed-upon and standardized set of schemas for structured data markup on web pages. Since then, the term “Schema.org” has become synonymous with the term “structured data”, and Schema.org structured data types are continually evolving, with new types being added relatively frequently. One of the main takeaways about structured data is that it helps disambiguate data for search engines so they can more easily understand information, and that certain marked-up elements may result in additional information being displayed in Search Engine Results Pages (SERPs), such as review stars, recipe cooking times, and so on. Note that adding structured data is not a guarantee of such SERP features. A number of structured data formats exist; JSON-LD (JavaScript Object Notation for Linked Data) has emerged as Google’s preferred and recommended method of doing structured data markup per the Schema.org guidelines, but other formats such as microdata and RDFa are also supported. JSON-LD is easier to add to pages, easier to maintain and change, and less prone to errors than microdata, which must be wrapped around existing HTML elements, whereas JSON-LD can be added as a single block in the HTML head section of a web page.
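To show what that “single block” can look like, here is a minimal Python sketch that builds a JSON-LD script tag for a recipe. The helper name `jsonld_script` and the recipe values are invented for illustration; the property names (`name`, `cookTime`, `recipeIngredient`) come from the Schema.org Recipe type, and which properties a search engine actually requires for a given SERP feature is not covered here.

```python
import json


def jsonld_script(data: dict) -> str:
    """Wrap a dict of Schema.org data in a JSON-LD <script> block."""
    payload = json.dumps(data, indent=2)
    # Escape "</" so a stray "</script>" inside a value cannot close the
    # tag early; "\/" is a valid JSON escape for "/".
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical recipe data using Schema.org Recipe properties.
recipe = {
    "@context": "https://schema.org",
    "@type": "Recipe",
    "name": "Example Pancakes",
    "cookTime": "PT15M",  # ISO 8601 duration: 15 minutes
    "recipeIngredient": ["2 cups flour", "2 eggs", "1.5 cups milk"],
}

if __name__ == "__main__":
    print(jsonld_script(recipe))
```

The printed block can be pasted into the head of the page without touching the visible HTML, which is the maintenance advantage over microdata described above.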
See the Schema.org FAQ page for further investigation – and to get started using microdata, RDFa and JSON-LD, check out our complete beginner’s guide to Schema.org markup.

Front-end vs. back-end, client-side vs. server-side

You may have talked to a developer who said, “I’m a front-end developer” and wondered what that meant. Or you may have heard someone say “oh, that’s a back-end functionality”. It can seem confusing what all this means, but it’s easily clarified. “Front-end” and “client-side” both mean the same thing: it happens (executes) in the browser. For example, JavaScript was originally developed as something that executed on a web page in the browser, and that means without having to make a call to the server. “Back-end” and “server-side” both mean the same thing: it happens (executes) on a server. For example, PHP is a server-side scripting language that executes on the server, not in the browser. Some Content Management Systems (CMS for short) like WordPress use PHP-based templates for web pages, and the content is called from the server to display in the browser.

Programming vs. scripting languages

Engineers and developers do have differing explanations and definitions of terms. Some will say ultimately there are no differences or that the lines are blurry, but the generally accepted difference between a programming language (like C or Pascal) and a scripting language (like JavaScript or PHP) is that a programming language requires an explicit compiling step, whereby human-created, human-readable code is turned into a specific set of machine-language instructions understandable by a computer, while a scripting language is typically interpreted at run time without that separate step.

Content Management System (CMS)

A CMS is a software application or a set of related programs used to create and manage websites (or we can use the fancy term “digital content”). At the core, you can use a CMS to create, edit, publish, and archive web pages, blog posts, and articles, and a CMS will typically have various built-in features.
Using a CMS to create a website means that there is no need to create any code from scratch, which is one of the main reasons CMSs have broad appeal. Another common aspect of CMSs is plugins, which can be integrated with the core CMS to add functionality that is not part of the core feature list. Common CMSs include WordPress, Drupal, Joomla, ExpressionEngine, Magento, WooCommerce, Shopify, Squarespace, and there are many, many others.

Content Delivery Network (CDN)

Sometimes called a “Content Distribution Network”, a CDN is a large network of geographically dispersed servers with the goal of serving web content from a server location closer to the client making the request in order to reduce latency (transfer delay). CDNs cache copies of your web content across these servers, and then the servers nearest to the website visitor serve the requested web content. CDNs are used to provide high availability along with high performance.

HTTPS, SSL, and TLS

Web data is passed between computers via data packets. Clients (web browsers) serve as the user interface when we request a web page from a server. HTTP (hypertext transfer protocol) is the communication method a browser uses to “talk to” a server and make requests. HTTPS is the secure version of this (hypertext transfer protocol secure). Website owners can switch their website to HTTPS to make the connection with users more secure and less prone to “man in the middle” attacks, where a third party intercepts or possibly alters the communication. SSL refers to “secure sockets layer” and is a standard security protocol to establish communication encryption between the server and the browser. TLS, Transport Layer Security, is the more recent successor to SSL.

HTTP/1.1 & HTTP/2

When Tim Berners-Lee invented the HTTP protocol in 1989, the computer he used did not have the processing power and memory of today’s computers.
A client (browser) connecting to a server using HTTP/1.1 receives information in a sequence of network request-response transactions, which are often referred to as “round trips” to the server, sometimes called “handshakes”. Each round trip takes time, and HTTPS is an HTTP connection with SSL/TLS layered in, which requires yet another handshake with the server. All of this takes time, causing latency. What was fast enough then is not necessarily fast enough now. HTTP/2 is the first new version of HTTP since 1.1. Simply put, HTTP/2 allows the server to deliver more resources to the client/browser faster than HTTP/1.1 by utilizing multiplexing, compression, request prioritization, and server push, which allows the server to send resources to the client that have not yet been requested.

Application Programming Interface (API)

Application is a general term that, simply put, refers to a type of software that can perform specific tasks. Examples of applications include desktop software, web browsers, and databases. An API is an interface with an application, typically a database. The API is like a messenger that takes requests, tells the system what you want, and returns the response back to you. If you’re in a restaurant and want the kitchen to make you a certain dish, the waiter who takes your order is the messenger that communicates between you and the kitchen, which is analogous to using an API to request and retrieve information from a database. For more info, check out Wikipedia’s Application programming interface page.

AMP, PWA, and SPA

If you want to build a website today, you have many choices. You can build it from scratch using HTML for content delivery along with CSS for look and feel and JavaScript for interactive elements. Or you could use a CMS (content management system) like WordPress, Magento, or Drupal. Or you could build it with AMP, PWA, or SPA.
AMP stands for Accelerated Mobile Pages and is an open source Google initiative built on a specified set of HTML tags and various functionality components, which are ever-evolving. The upside to AMP is lightning-fast loading web pages when coded according to AMP specifications; the downside is that some desired features may not currently be supported, and proper analytics tracking can be an issue.

PWA stands for Progressive Web App, and it blends the best of both worlds between traditional websites and mobile phone apps. PWAs deliver a native app-like experience to users, with features such as push notifications, the ability to work offline, and a start icon on your mobile phone. By using “service workers” to communicate between the client and server, PWAs combine fast-loading web pages with the ability to act like a native mobile phone app at the same time. However, because PWAs are built with JavaScript frameworks, you may encounter a number of technical challenges.